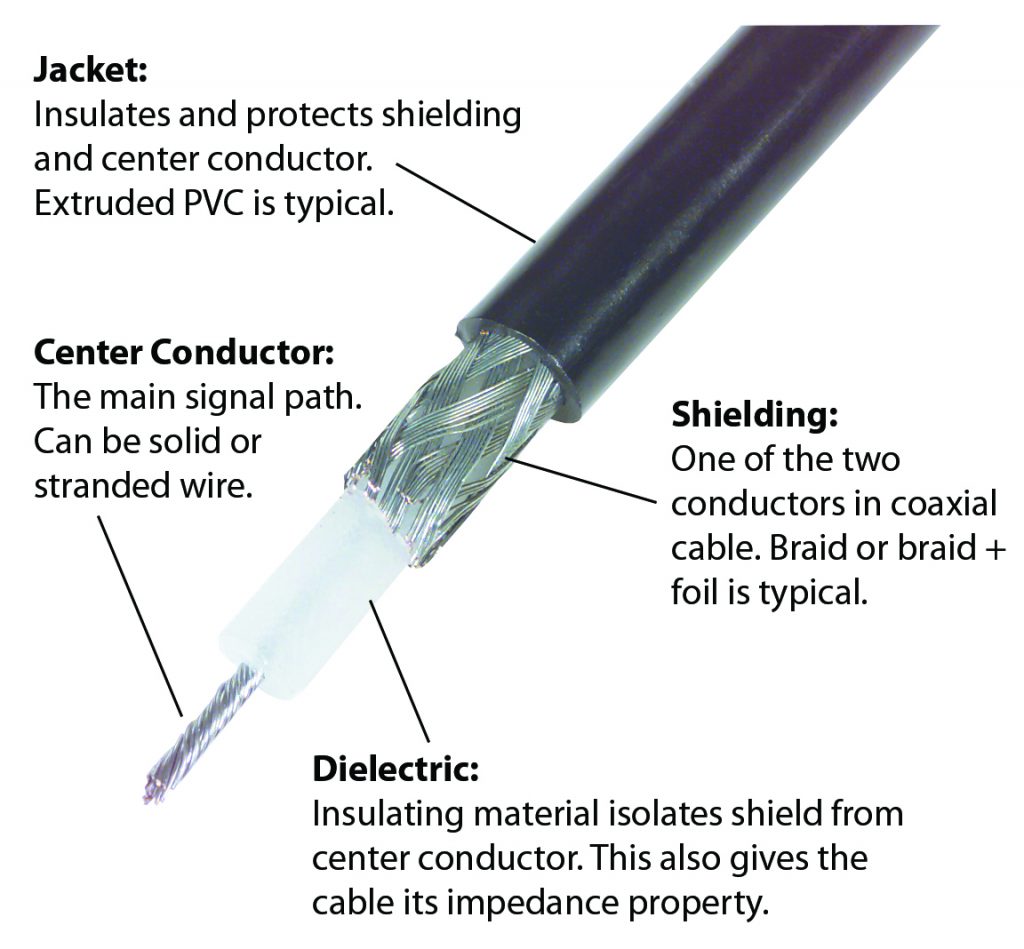

Coaxial cables are commonly known as a broadband, relatively low-loss, high-isolation transmission line technology. A coaxial cable consists of two concentric conductive cylinders separated by a dielectric spacer. The capacitance and inductance distributed along the coaxial line create a distributed impedance throughout the structure, known as the characteristic impedance.
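As a rough illustration of how the distributed inductance and capacitance set the characteristic impedance, here is a minimal Python sketch using the textbook formulas for an ideal, lossless coaxial line. The function name and the example dimensions are assumptions chosen for illustration; they do not describe any particular cable.

```python
import math

MU_0 = 4e-7 * math.pi       # vacuum permeability, H/m
EPS_0 = 8.8541878128e-12    # vacuum permittivity, F/m


def coax_line_parameters(inner_d, outer_d, eps_r):
    """Return (L', C', Z0) for an ideal lossless coaxial line.

    inner_d: outer diameter of the center conductor (m)
    outer_d: inner diameter of the shield (m)
    eps_r:   relative permittivity of the dielectric spacer
    """
    log_ratio = math.log(outer_d / inner_d)
    l_per_m = MU_0 / (2 * math.pi) * log_ratio        # inductance, H/m
    c_per_m = 2 * math.pi * EPS_0 * eps_r / log_ratio  # capacitance, F/m
    z0 = math.sqrt(l_per_m / c_per_m)                  # characteristic impedance, ohms
    return l_per_m, c_per_m, z0


# Hypothetical example: PTFE-like dielectric (eps_r = 2.1), diameter ratio 3.3
l_per_m, c_per_m, z0 = coax_line_parameters(0.9e-3, 2.97e-3, 2.1)
print(f"L' = {l_per_m * 1e9:.1f} nH/m, C' = {c_per_m * 1e12:.1f} pF/m, Z0 = {z0:.1f} ohm")
```

With these assumed dimensions the result lands in the neighborhood of 50 Ω. Note that only the ratio of the two diameters and the dielectric matter here, not the absolute size of the cable.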

Because the resistive losses are distributed along the coaxial cable, the attenuation and behavior along the line are predictable. These factors allow coaxial cables to carry electromagnetic (EM) energy with much less loss, and less interference, than free-space propagation from an antenna.

Because a coaxial cable has a conductive outer shield, additional material layers can be applied to its outside to improve environmental performance, EM shielding, and flexibility. Coaxial cables can be made of braided conductive strands and intelligently layered to create highly flexible and reconfigurable cabling that is both lightweight and durable. As long as the concentricity of the conductive cylinders is maintained, bending and flexing will only marginally affect the cable's performance. For this reason, coaxial cables are commonly joined with coaxial connectors that use a screw-type mechanism, and the tightness is controlled with a torque wrench.

Two important frequency-dependent behaviors of coaxial lines define their application potential: skin depth and the cutoff frequency. Skin depth describes a phenomenon that affects higher-frequency signals traveling along the coaxial line. The higher the frequency, the more the current concentrates near the surface of the conductors. This skin effect raises the effective resistance of the conductors, which leads to increased attenuation and conductor heating along the coaxial line. To reduce the losses from the skin effect, a larger-diameter coaxial cable can be used, since it gives the current more conductor surface to flow through.
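The skin effect can be sketched numerically with the standard skin-depth formula for a good conductor. The snippet below is a minimal illustration, not a loss model for any specific cable: the copper resistivity is an approximate room-temperature value, the function names are made up for this example, and the resistance estimate assumes the skin depth is much smaller than the conductor thickness.

```python
import math

MU_0 = 4e-7 * math.pi   # vacuum permeability, H/m
RHO_COPPER = 1.68e-8    # approximate resistivity of copper, ohm*m (assumed value)


def skin_depth(freq_hz, resistivity=RHO_COPPER, mu_r=1.0):
    """Depth (m) at which current density falls to 1/e of its surface value."""
    return math.sqrt(resistivity / (math.pi * freq_hz * MU_0 * mu_r))


def ac_resistance_per_m(freq_hz, inner_d, outer_d, resistivity=RHO_COPPER):
    """Approximate series resistance (ohm/m) of both conductors at high frequency.

    Treats the current as flowing in a thin layer one skin depth thick on the
    outside of the center conductor and the inside of the shield.
    """
    surface_resistance = resistivity / skin_depth(freq_hz, resistivity)  # ohms per square
    return surface_resistance / math.pi * (1.0 / inner_d + 1.0 / outer_d)


for f in (1e8, 1e9, 1e10):
    print(f"{f:.0e} Hz: skin depth = {skin_depth(f) * 1e6:.2f} um")
```

Because the skin depth scales with the inverse square root of frequency, quadrupling the frequency halves the skin depth. In this simplified model, doubling both conductor diameters halves the series resistance, which is the reason a larger cable reduces conductor loss.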

Although a larger cable looks like an attractive way to improve performance, increasing a coaxial cable's dimensions reduces the maximum frequency it can transmit. The cutoff frequency is reached when the wavelength of the EM energy becomes short enough, relative to the cable's cross-section, that the energy no longer propagates only in the transverse electromagnetic (TEM) mode and begins to “bounce” along the coaxial line as a transverse electric mode, TE11. This higher-order mode is troublesome because it travels at a different velocity than the TEM mode and can cause reflections and interference with the TEM-mode signals traveling through the coaxial cable.
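A common rule of thumb places the TE11 cutoff near the frequency at which one wavelength in the dielectric equals the average circumference of the two conductors. The sketch below uses that approximation; the function name is invented for this example, and the diameter ratio of 2.3 is an assumption that roughly corresponds to a 50 Ω air-dielectric line.

```python
import math

C_0 = 299_792_458.0  # speed of light in vacuum, m/s


def te11_cutoff_hz(inner_d, outer_d, eps_r=1.0):
    """Approximate TE11 cutoff frequency (Hz) of a coaxial line.

    Rule of thumb: the cutoff wavelength in the dielectric is about the
    mean circumference, pi * (inner_d + outer_d) / 2.
    """
    mean_circumference = math.pi * (inner_d + outer_d) / 2.0
    return C_0 / (mean_circumference * math.sqrt(eps_r))


# Hypothetical air-dielectric lines with an assumed outer/inner ratio of 2.3
for outer_mm in (7.0, 3.5, 1.85, 1.0):
    outer_d = outer_mm * 1e-3
    f_c = te11_cutoff_hz(outer_d / 2.3, outer_d)
    print(f"outer diameter {outer_mm} mm: TE11 cutoff near {f_c / 1e9:.0f} GHz")
```

The estimate is inversely proportional to the cable's cross-sectional size, so halving both diameters doubles the approximate cutoff. Practical cables and connectors are usually rated somewhat below this figure to keep a safety margin.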

The solution to this problem is to reduce the size of the coaxial cable, which raises the cutoff frequency. Coaxial cables and connectors now reach into the millimeter-wave frequencies, for example with 1.85 mm and 1 mm coaxial connectors. It is important to note that as the physical dimensions shrink to handle higher frequencies, the losses increase and the power-handling capability of the cable is reduced. Another challenge in building these extremely small components is keeping the mechanical tolerances tight enough to avoid electrically significant imperfections and impedance changes along the line. This makes the manufacturing process expensive and results in relatively sensitive cables.

Pasternack Blog
